NVIDIA DGX Spark

Personal AI Supercomputer for the Enterprise

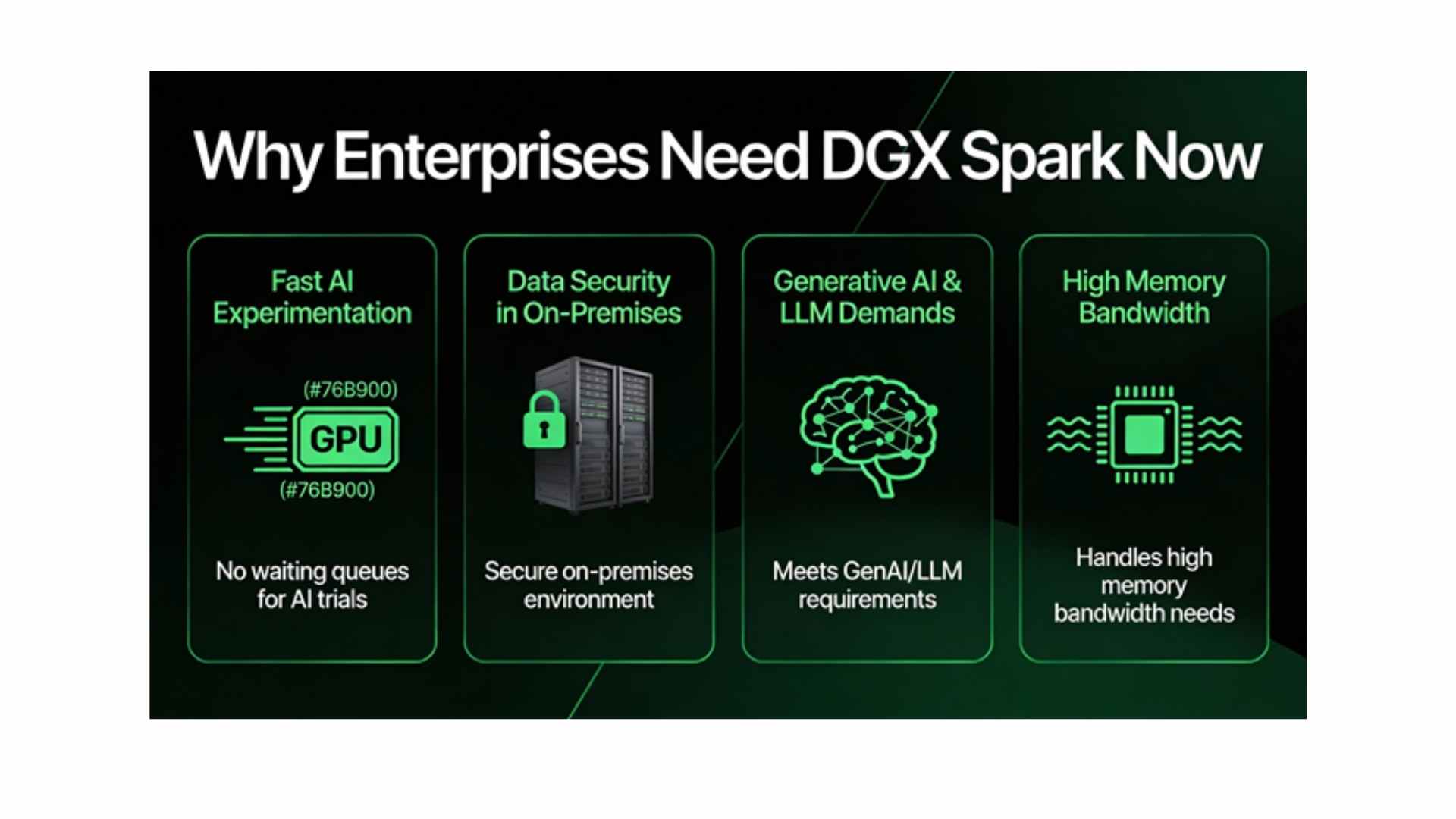

Why DGX Spark Now

- Enterprise AI teams need fast, secure experimentation without waiting for shared cluster capacity.

- Data-sensitive workloads require on-prem, controlled environments.

- Generative AI, LLMs, and RAG patterns demand high memory bandwidth and FP4/FP8/FP16 compute.

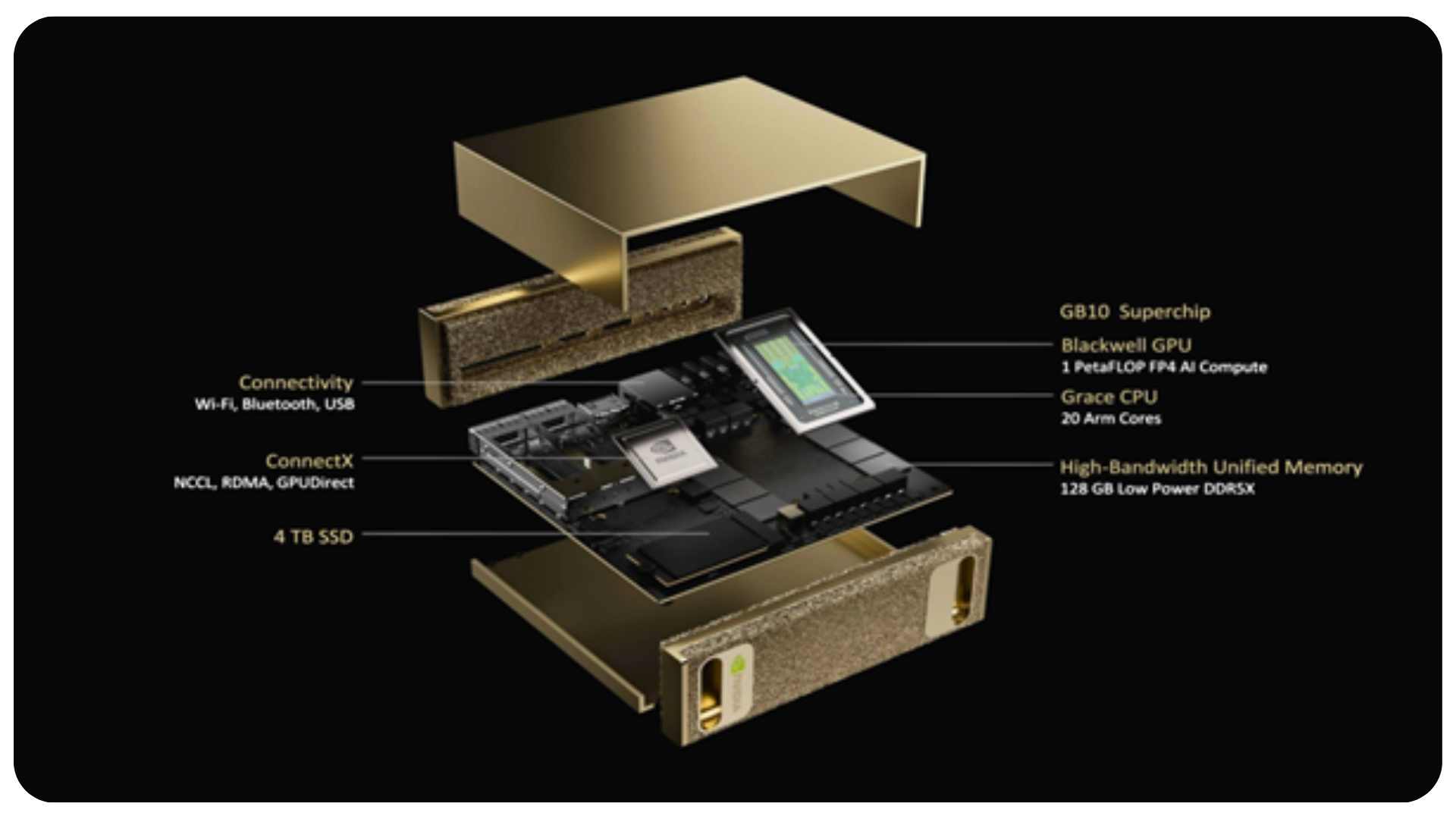

Grace Blackwell Architecture

Desktop-class AI supercomputer with 128 GB of unified memory and up to 1 PFLOP of peak FP4 AI compute for production-grade workloads.

Enterprise-Ready Performance

Run fine-tuning, LLM inference, and multimodal model development locally, without cloud dependencies.

Ecosystem Integration

Consistent APIs with DGX Cloud and full DGX systems for seamless workload scaling and portability.

On-Premises Control

Deploy confidently in regulated environments with full data governance and secure, isolated inference.

What Is DGX Spark

- A personal AI compute system for advanced model development, tuning, and inference.

- Combines Grace CPU and Blackwell GPU with unified memory.

- Bridges workstations and full DGX infrastructure with consistent APIs.

- Optimized for generative AI, LLM exploration, and RAG pipelines.

Core Technical Capabilities

- High-performance compute supporting FP4, FP8, and FP16 precision.

- Unified system memory architecture for large-parameter models.

- Grace Blackwell platform with a high-bandwidth, low-latency NVLink-C2C link between CPU and GPU.

- Optimized thermals and power design for production-grade workloads.

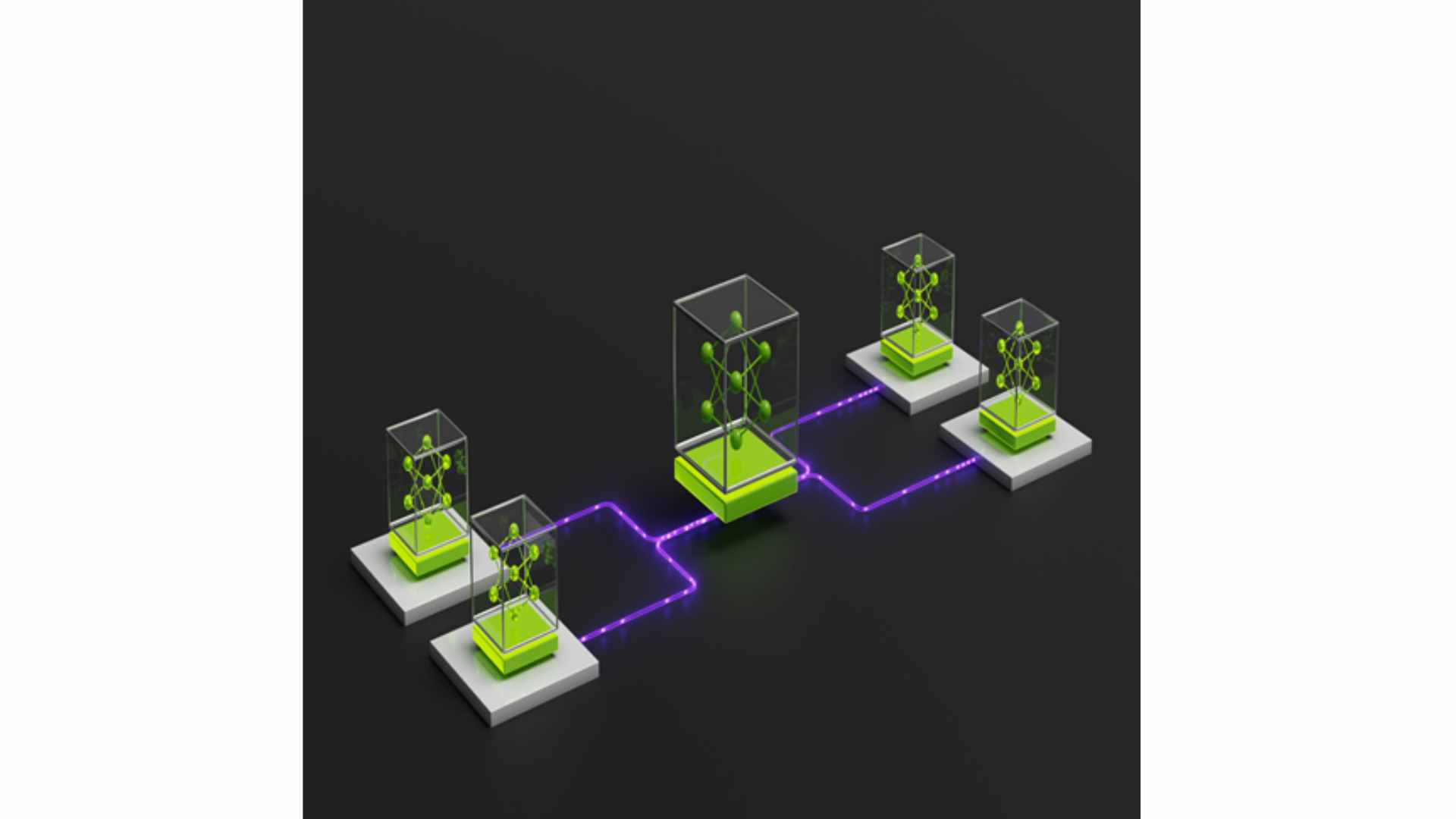

- Scales from a single DGX Spark to paired configurations.

- High-speed interconnects and enterprise-grade I/O.

- Supports containerized and virtualized AI workloads.

- Validated to run NVIDIA AI Enterprise and DGX OS.
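As a rough illustration of why precision and unified memory matter for large-parameter models, the sketch below estimates the weight-only footprint of a model at FP16, FP8, and FP4 and compares it with the 128 GB of unified memory quoted earlier. The function names are hypothetical, and the paired-capacity figure is an assumption (a simple sum of two units). Real deployments also need headroom for the OS, activations, and KV cache, so treat the result as an upper bound on what fits.

```python
# Bytes needed to store one parameter at each precision named above.
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}

# Unified memory per DGX Spark, as quoted in this deck.
UNIFIED_MEMORY_GB = 128


def weight_footprint_gb(params_billion: float, precision: str) -> float:
    """Approximate weight-only footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion: float, precision: str, units: int = 1) -> bool:
    """True if the weights alone fit in the combined memory of `units` systems.

    Assumption: paired capacity is treated as a simple sum, with no
    allowance for the OS, activations, or KV cache.
    """
    capacity_gb = UNIFIED_MEMORY_GB * units
    return weight_footprint_gb(params_billion, precision) <= capacity_gb


if __name__ == "__main__":
    # Hypothetical 70B-parameter model, chosen only for illustration.
    for precision in BYTES_PER_PARAM:
        size = weight_footprint_gb(70, precision)
        print(f"70B @ {precision}: {size:.0f} GB, "
              f"single unit: {fits(70, precision)}, "
              f"paired: {fits(70, precision, units=2)}")
```

Under these assumptions, a 70B-parameter model needs about 140 GB at FP16, which exceeds a single unit, while FP8 (about 70 GB) and FP4 (about 35 GB) leave room to spare. This is the practical reason lower-precision formats and paired configurations appear together in the capability list.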

Target Workloads & Use Cases

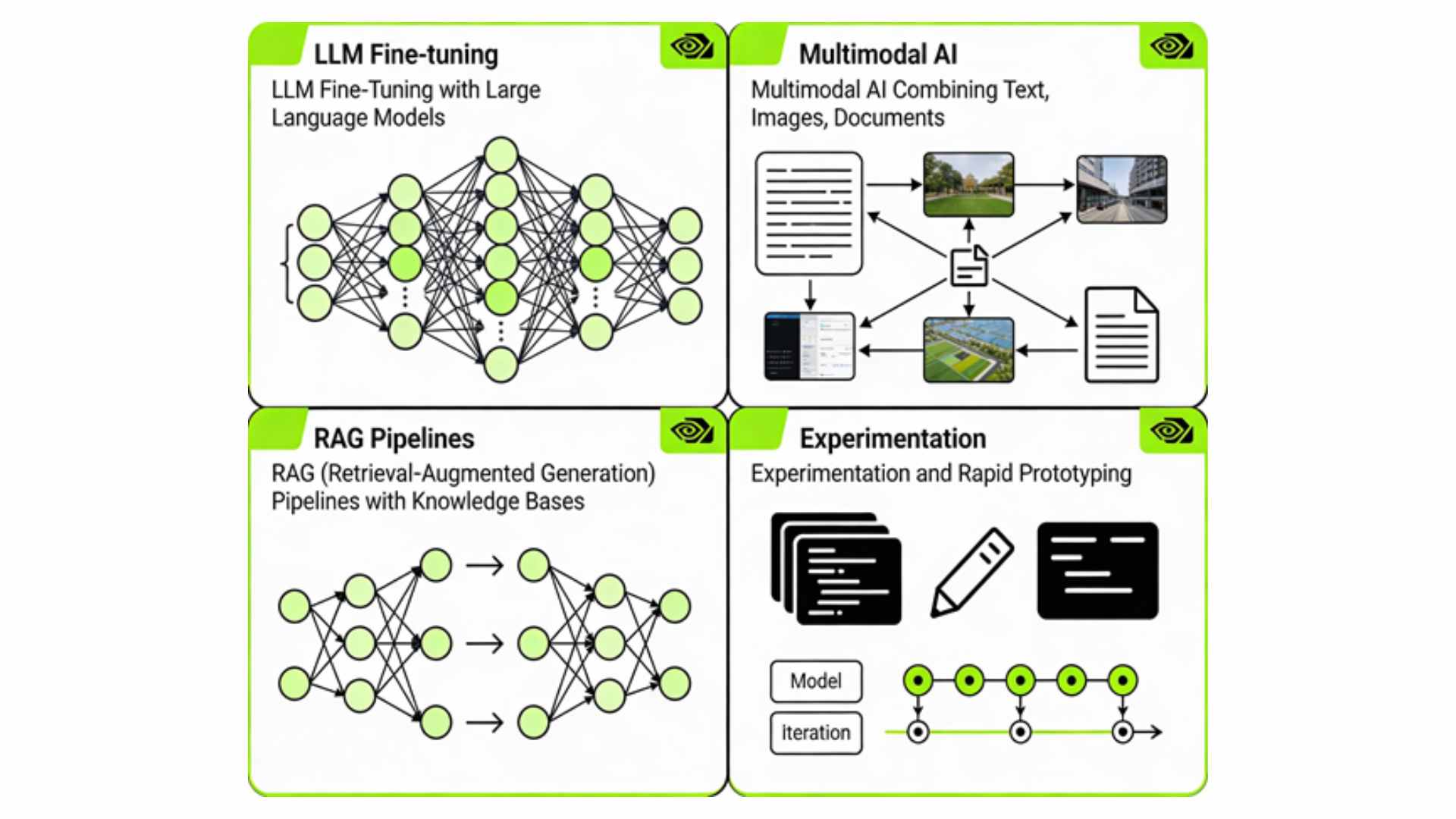

- LLM fine-tuning for domain-specific assistants.

- Multimodal development combining text, image, and documents.

- RAG pipelines for knowledge-heavy use cases.

- Experimentation sandboxes for rapid validation.

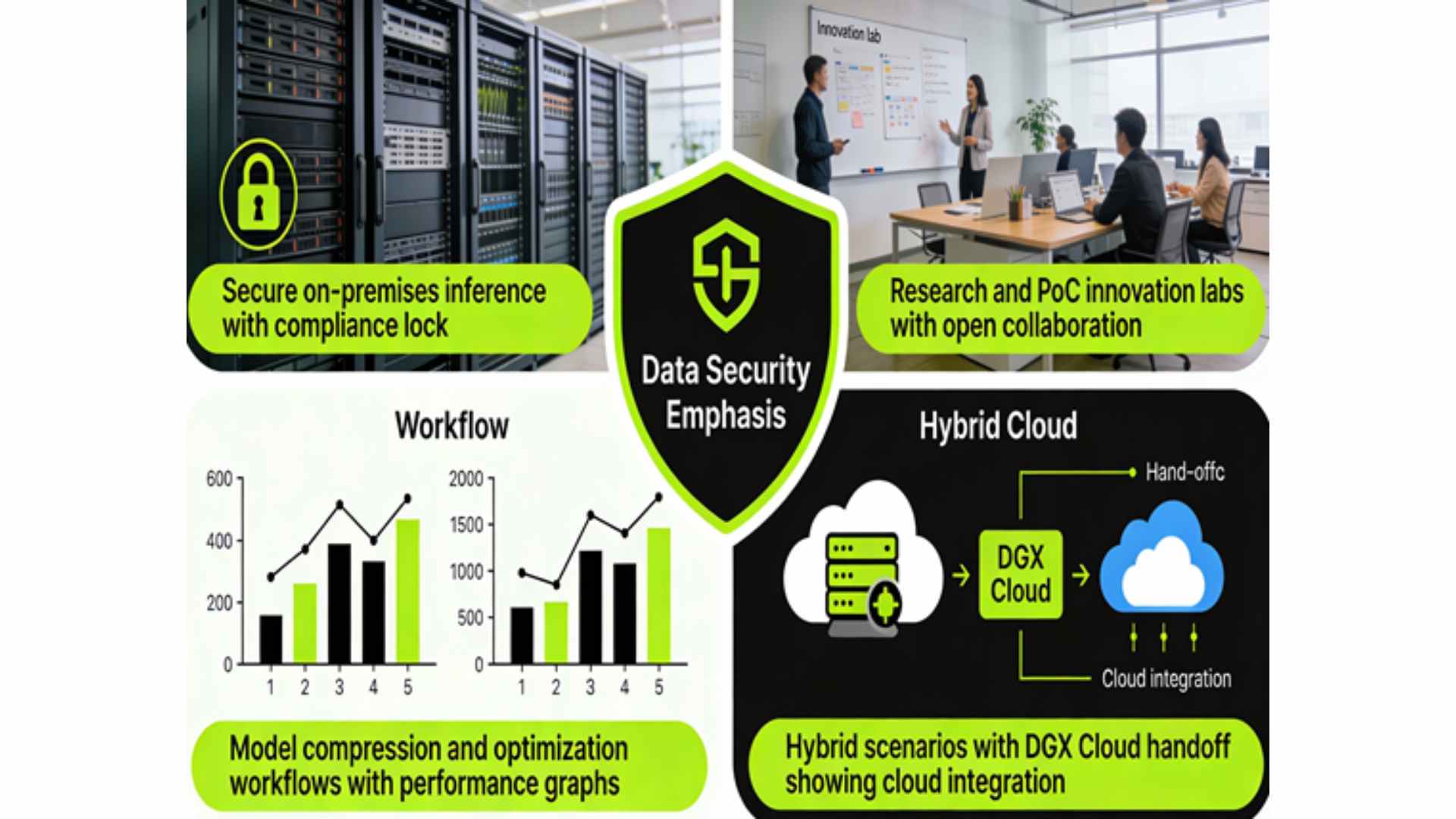

- Secure on-prem inference for regulated environments.

- Research and PoC platforms for innovation labs.

- Model compression and optimization workflows.

- Hybrid scenarios with DGX Cloud handoff.

Core Runtime

DGX OS • CUDA 12.x • cuDNN 9.0

AI Frameworks

PyTorch • TensorFlow • JAX

Optimization

TensorRT • NeuralEngine • Triton

Container & Models

NGC Registry • Docker • Kubernetes
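A quick way to confirm which layers of the stack above are present on a given machine is a small presence check. The sketch below uses only the Python standard library. The command names and package names are assumptions about a typical installation, not taken from NVIDIA documentation, and the check reports only whether each item can be found, not its version.

```python
import importlib.util
import shutil

# Assumed command-line tools for the runtime and container layers.
CLI_TOOLS = ["nvidia-smi", "nvcc", "docker", "kubectl"]

# Assumed Python package names for the framework and optimization layers.
PYTHON_PACKAGES = ["torch", "tensorflow", "jax", "tensorrt"]


def check_stack() -> dict:
    """Return {name: bool}, True when the tool or package was found."""
    report = {}
    for tool in CLI_TOOLS:
        # shutil.which returns the executable path, or None if not on PATH.
        report[tool] = shutil.which(tool) is not None
    for package in PYTHON_PACKAGES:
        try:
            # find_spec locates a top-level package without importing it.
            report[package] = importlib.util.find_spec(package) is not None
        except (ImportError, ValueError):
            report[package] = False
    return report


if __name__ == "__main__":
    for name, found in check_stack().items():
        print(f"{name:12s} {'found' if found else 'missing'}")
```

Running this before a pilot gives a first-pass inventory. Anything reported as missing may simply live inside a container image rather than on the host.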


Deployment & Integration Models

- Single-node for individual engineers.

- Paired deployments for heavier models.

- Integration with corporate networks and controls.

- Foundation for broader DGX footprint.

- NVIDIA-validated hardware and software stack.

- Long-term firmware, driver, and software support.

- Micropoint-led deployment and optimization services.

- Training and enablement for engineering teams.

Adoption Path & Scaling Journey

Timeline / Roadmap Visual

Value for Enterprise Clients

- Shorter experimentation cycles and faster path from PoC to production.

- Improved data governance and compliance via on-prem, controlled AI infrastructure.

- Higher developer productivity with a standardized, high-performance AI workstation platform.

- Lower-risk, future-ready investment that aligns with NVIDIA’s DGX and Grace Blackwell roadmap.

Transform Your AI Capability

DGX Spark: Personal Supercomputer. Enterprise-Ready. Now.

Ready to Accelerate

Pilot deployment in days, not months. Validate on your data, your infrastructure, your terms.

Next Steps

Schedule a technical consultation to discuss your AI initiatives and custom DGX Spark configuration.

Expert Partnership

Micropoint and NVIDIA ensure seamless deployment, optimization, and ongoing support.

Our Partners

Power Your Enterprise AI with NVIDIA DGX Spark

Talk to our team and discover the future of accelerated computing.